A guest blog by Tavi Brandenburg, with Hyrum Booth and Beth Loudon

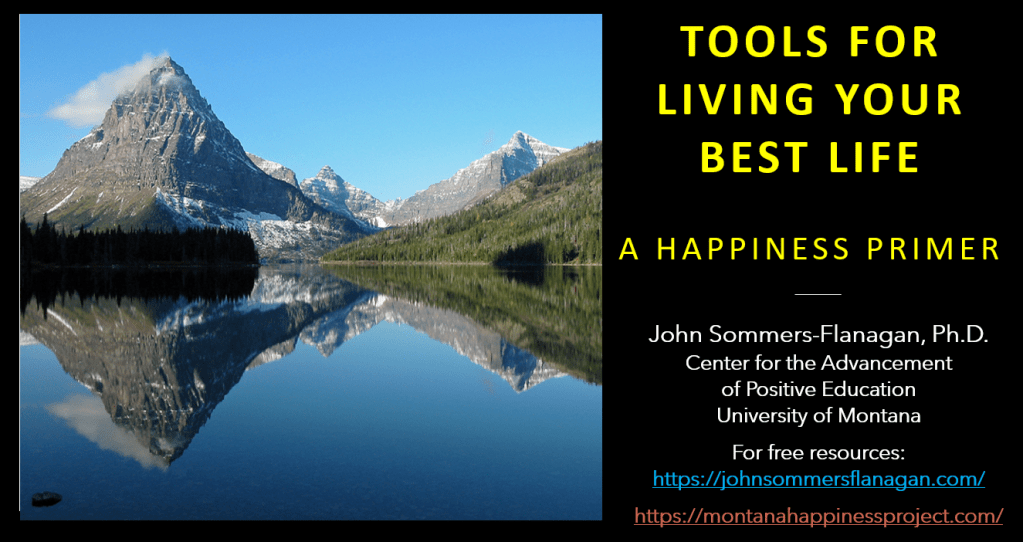

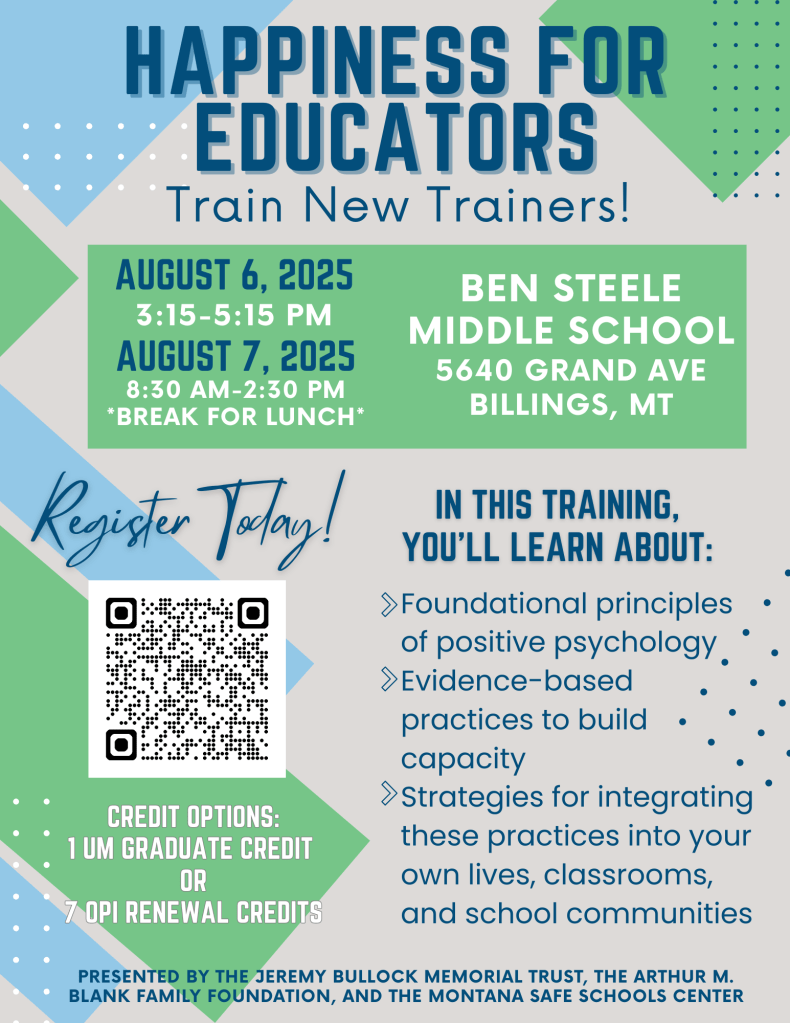

**JSF Note: Below you’ll find a guest blog piece from Tavi Brandenburg. Tavi is a doctoral student in Counseling and Supervision at the University of Montana. The blog piece is a summary of themes she, Hyrum, and Beth (two more doc students) derived from a qualitative study of educator responses to our Happiness for Educators course. The themes are in bold. As you may know, the HFE course is a 3-credit asynchronous course offered through the University of Montana. I hope you enjoy this “Amazing” summary. I am grateful to Tavi, Hyrum, and Beth for their support of our HFE course.**

For the past several months, Hyrum Booth, Beth Loudon, and I (Tavi Brandenburg) have been working on many aspects of the Evidence-based Happiness for Educators Course (COUN 591) offered through CAPE & UM. We had the good fortune to share a bit about the course and our findings at the Association for Counselor Education and Supervision conference in Philadelphia. Here’s a link to the presentation slide deck:

Hyrum and I conducted 38 interviews. We enjoyed immersing ourselves in the interview transcripts to make sense of what the participants shared. We asked about participants’ lasting impressions of the course, how they applied (and continue to apply) the evidence-based strategies presented during the course, and the lasting effects of the course on their personal and professional lives. While not always simple and straightforward, the qualitative results (the stories we collected) indicated positive impressions of the course, continued application of the strength-based strategies, and positive ongoing effects, both personally and interpersonally. And it was much more than that: the participants shared stories that illustrate deep, fundamental shifts in personal wellbeing that have lasted over time. The course caused a ripple effect.

As with any ripple effect, there is a catalyst for change: a pebble, a stone, a boulder. For Montana educators, this course acted as that catalyst, sending participants in the direction of introspection and personal development. Most participants experienced some level of dissonance due to discrepancies between what the course asked of them and their motivations for signing up, which included movement on the pay scale, inexpensive continuing education credits, and preconceived notions of the course or its content based on what they had heard from others. This dissonance was also related to the course structure, expectations, and the responsive feedback participants received. The personal nature of the course caught many off guard. The flexibility of the course design provided autonomy and allowed participants to select evidence-based practices that were meaningful to them, rather than progressing through a set of prescribed exercises that might not resonate. Dissonance also came up for folks when the content felt too close to home. When participants were going through personally challenging life events while taking the course, they tended to have strong reactions to the material, the activities, the pace, or the amount of material. As a result of the dissonance they experienced, participants tended to give themselves permission for self-care, which allowed greater ‘flow’ within their professional and personal lives. Additionally, some participants described the content as wide-ranging, from more approachable concepts like developing tiny habits to emotionally challenging exercises like experimenting with forgiveness. For some, the breadth of the content coupled with the pace of the course felt like too much at once.

While engaging with the content, participants experienced vulnerability. Depending on how deeply participants allowed themselves to go while completing the evidence-based practices, vulnerability challenged their sense of comfort; it is not always easy to look inward. Vulnerability also contributed to meaningful connections with family members, colleagues, and students, and it led to increased self-awareness, other-awareness, and empathy.

Participants described being more mindfully present in their daily lives, from doing the dishes, to grading papers, to engaging with loved ones and learning communities. For some, vulnerability and presence opened the way to important, life-changing steps. For some, this meant an increased ability to set boundaries around work or in areas of their lives where they had historically struggled to say ‘no,’ allowing them to maintain energy for themselves and their own wellbeing or to deepen their connection with loved ones. Several participants took radical steps to prioritize themselves by leaving relationships or K-12 education. These participants specifically stated that the course did not cause these substantial changes; rather, the course illuminated a way of being that was more in line with how they wanted to live their lives, and they had the presence of mind to make momentous changes.

Another ripple from taking the course was the development and/or reinforcement of a wellbeing toolkit that participants used for themselves and shared with loved ones and members of their learning communities. Many participants took evidence-based exercises from the course and directly and intentionally applied them in their own lives, with their families, with their students, and in their learning communities. Some teachers reported marked improvement in the classroom community, noting that students were more engaged in their learning, more empathic with others, and more willing to take intellectual risks (to be vulnerable). Many participants noted incorporating gratitude into their daily lives at home and at school. Professional development is often a ‘top-down’ experience, leaving teachers struggling to connect with the ‘why’ of new practices. This course, as professional development, had the opposite effect. Teachers applied the practices and developed a wellbeing toolkit that worked for them, leading them to understand deeply the importance of strength-based practices. The autonomy created by the course structure allowed for creativity, authenticity, and agency in how educators incorporated the material into their personal and professional lives. Educators incorporated strengths-based concepts into a variety of areas, from Special Education and Educational Leadership to classroom teaching at all levels and in all subjects, to School Counseling, and beyond.

Participants found the course and its content so meaningful that they advocated for it on many levels and took on leadership roles with the content (arranging guest speakers, joining John to advocate for CAPE, and sharing fliers with colleagues, with family members who are also in education, and with whole districts). Some advocated at the district leadership level for ‘trickle-down happiness.’ We know that students are happier when their teachers are happier. The connection with others through the course helped educators feel less professionally isolated. This sense of connection led many participants to advocate for the course and to work toward better mental health for others in their communities.

We interviewed participants who had taken the course anywhere from four months to about one and a half years prior to the interview. While many participants stated that they use their wellbeing toolkit as needed, especially as they encounter new challenges, we also heard participants refer to their notes during the interviews and wish they had periodic reminders about the content; many kept their course folders handy. This suggests a quality of evanescence: participants’ connections with the content faded over time, and there seemed to be genuine interest in a second-level course or some other way to stay regularly connected with the content.

We sought to understand the lasting effects, the applications, and the lingering memories participants had from the course. What we found, however, was a ripple of themes that illustrate the deep and meaningful change that is possible when people are provided with strengths-based information and an invitation to engage in self-reflective activities; participants connected with their own agency, resulting in improved mental and physical health. Amazing.